Integrate AI chatbots and text-to-speech services into Unreal Engine projects. Supports leading providers including OpenAI, Claude, DeepSeek, Gemini, Grok, Ollama, ElevenLabs, Google Cloud, and Azure - with full Blueprint and C++ support.

The plugin provides a unified API for communicating with external AI providers over HTTPS (or plain HTTP for a local Ollama server). Send conversation history to a chat model, or text to a TTS voice, and receive either a streamed or a complete response, all from Blueprint or C++.

Provide credentials for one or more supported providers once at startup

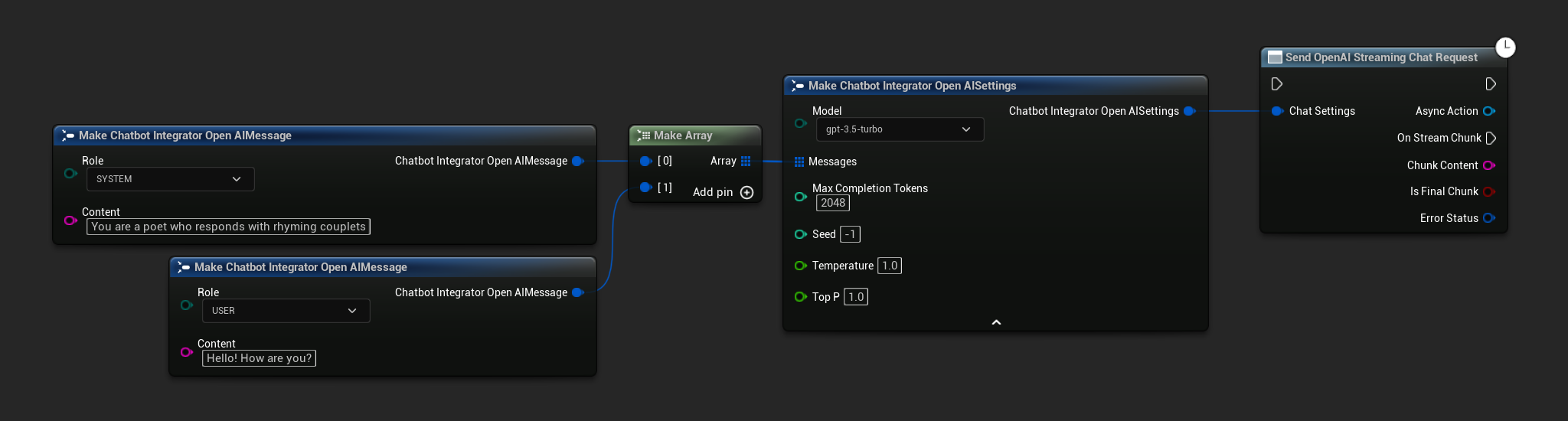

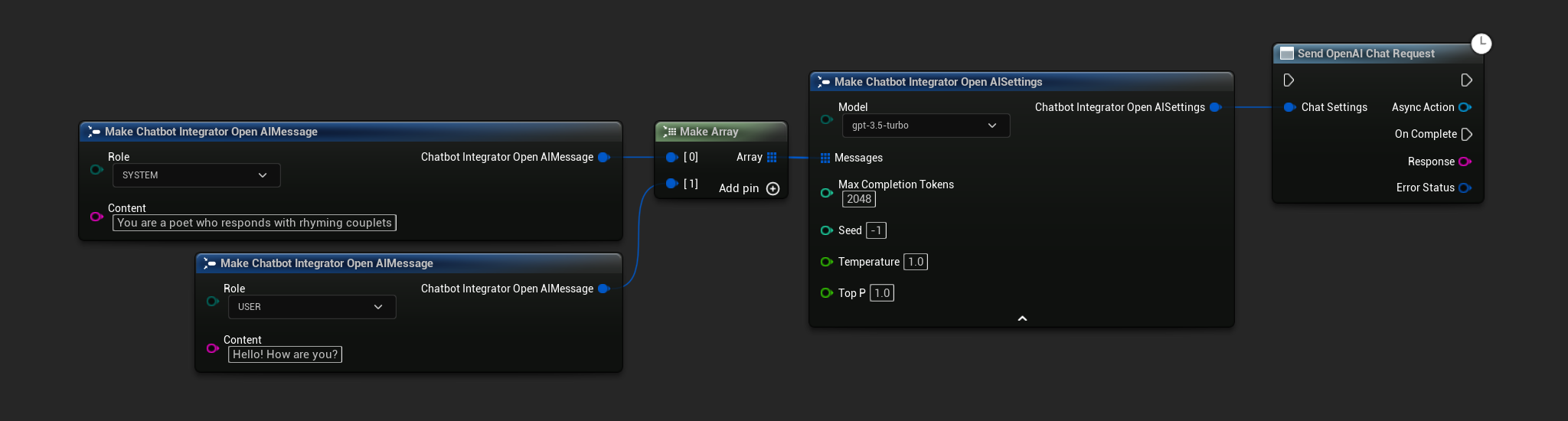

Pass conversation history, model name, and parameters from Blueprint or C++

Receive real-time token chunks or wait for the full response - both modes supported

Bind to result delegates and pipe text or audio into your game logic
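
For OpenAI-style chat APIs, the conversation history, model name, and parameters end up in a JSON request body. The plugin assembles this body for you. The sketch below only shows what such a payload typically looks like for OpenAI's Chat Completions endpoint: each message carries a `role` and `content`, and `stream` selects the response mode. `ChatMessage`, `JsonEscape`, and `BuildChatRequestBody` are hypothetical names, not part of the plugin, and other providers (Claude, Gemini) use different request formats.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdio>
#include <string>
#include <vector>

// Hypothetical message type; the plugin's own structs may differ.
struct ChatMessage {
    std::string role;     // "system", "user", or "assistant"
    std::string content;
};

// Escapes text for use inside a JSON string literal.
std::string JsonEscape(const std::string& in) {
    std::string out;
    for (unsigned char c : in) {
        switch (c) {
            case '"':  out += "\\\""; break;
            case '\\': out += "\\\\"; break;
            case '\n': out += "\\n"; break;
            case '\r': out += "\\r"; break;
            case '\t': out += "\\t"; break;
            default:
                if (c < 0x20) {  // remaining control characters need numeric escapes
                    char buf[7];
                    std::snprintf(buf, sizeof buf, "\\u%04x", static_cast<unsigned>(c));
                    out += buf;
                } else {
                    out += static_cast<char>(c);  // UTF-8 bytes pass through unchanged
                }
        }
    }
    return out;
}

// Builds an OpenAI-style Chat Completions body: model, full history, stream flag.
std::string BuildChatRequestBody(const std::string& model,
                                 const std::vector<ChatMessage>& history,
                                 bool stream) {
    std::string body = "{\"model\":\"" + JsonEscape(model) + "\",\"messages\":[";
    for (std::size_t i = 0; i < history.size(); ++i) {
        if (i > 0) body += ',';
        body += "{\"role\":\"" + JsonEscape(history[i].role) +
                "\",\"content\":\"" + JsonEscape(history[i].content) + "\"}";
    }
    body += "],\"stream\":";
    body += stream ? "true" : "false";
    body += '}';
    return body;
}
```

Because these chat APIs are stateless, every request resends the full history. Appending the model's reply as an `assistant` message before the next turn is what keeps the conversation coherent.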

Six providers covering the full spectrum of large language models - from cloud-hosted frontier models to locally run open-source alternatives.

GPT-4o, GPT-5, o1, o3, and the latest OpenAI reasoning and multimodal models.

Claude Opus, Sonnet, and Haiku - including the latest Claude 4.6 family.

DeepSeek Chat and DeepSeek Reasoner with explicit reasoning output exposed via separate delegates.

Gemini 2.5 Pro / Flash and Grok 3 / 4 - including Grok's reasoning variants.

Any model in the Ollama library - Llama, Mistral, Gemma, Qwen, and more. No API key required.

Four enterprise-grade TTS providers with extensive voice libraries, language coverage, and streaming support.

Natural voices (Alloy, Nova, Shimmer, and more) with streaming support and TTS-1-HD quality.

Expressive, high-fidelity voice synthesis across 70+ languages with Eleven V3 and Turbo models.

Neural and Studio voices from Google Cloud TTS, plus Azure neural voices with emotion and SSML style support. Programmatic voice discovery included for both.

Beyond basic request/response, the plugin provides streaming, reasoning output, request cancellation, voice discovery, and full error handling - all accessible from Blueprints or C++.

Two conversation modes: wait for the complete response, or receive real-time token chunks as they are generated. Both modes are supported across all conversation providers.
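
With OpenAI-style APIs, streamed responses usually arrive as Server-Sent Events: lines of the form `data: {json}`, ending with `data: [DONE]`. A network chunk can end in the middle of a line, so the stream has to be buffered before it is split. The sketch below shows one way to reassemble it. `SseLineBuffer` is a hypothetical helper, not the plugin's API, and providers that use named SSE events instead of a `[DONE]` sentinel need different end-of-stream handling.

```cpp
#include <cassert>
#include <functional>
#include <string>
#include <utility>
#include <vector>

// Hypothetical helper (not the plugin's API): reassembles Server-Sent Events
// "data:" payloads from network chunks that may split lines anywhere.
class SseLineBuffer {
public:
    explicit SseLineBuffer(std::function<void(const std::string&)> onData)
        : onData_(std::move(onData)) {}

    // Feeds one chunk; returns false once the "[DONE]" sentinel has been seen.
    bool Feed(const std::string& chunk) {
        if (done_) return false;
        buffer_ += chunk;
        std::string::size_type newline;
        while ((newline = buffer_.find('\n')) != std::string::npos) {
            std::string line = buffer_.substr(0, newline);
            buffer_.erase(0, newline + 1);
            if (!line.empty() && line.back() == '\r') line.pop_back();  // accept CRLF
            if (line.compare(0, 5, "data:") != 0) continue;  // blank separators, other fields
            std::string payload = line.substr(5);
            if (!payload.empty() && payload.front() == ' ') payload.erase(0, 1);
            if (payload == "[DONE]") {
                done_ = true;
                return false;
            }
            onData_(payload);  // typically one JSON object holding a text delta
        }
        return true;
    }

private:
    std::function<void(const std::string&)> onData_;
    std::string buffer_;  // holds an incomplete trailing line between chunks
    bool done_ = false;
};
```

In streaming mode each payload is passed on as soon as it arrives. The complete mode corresponds to waiting for `[DONE]` and concatenating the text deltas.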

Convert AI-generated text to speech as complete audio in a single response, or stream audio chunks in real time for immediate playback of longer outputs.
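
When audio is streamed, chunk boundaries are arbitrary, so a 16-bit sample can straddle two chunks. The sketch below assumes raw 16-bit signed little-endian PCM. This is the format OpenAI documents for its `pcm` output (24 kHz mono). The sketch converts bytes to float samples and carries an odd trailing byte over to the next chunk. `Pcm16Decoder` is a hypothetical name. In practice the audio companion plugin described below handles decoding and playback, and compressed formats such as MP3 or Opus need a real decoder.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical helper: converts streamed 16-bit little-endian PCM bytes to
// floats in [-1, 1), carrying an odd trailing byte over to the next chunk.
class Pcm16Decoder {
public:
    std::vector<float> Feed(const std::vector<std::uint8_t>& chunk) {
        std::vector<float> out;
        out.reserve(chunk.size() / 2 + 1);
        for (std::uint8_t byte : chunk) {
            if (!hasPending_) {  // first byte of a sample: wait for its partner
                pending_ = byte;
                hasPending_ = true;
                continue;
            }
            const int raw = pending_ | (byte << 8);  // low byte first, then high byte
            const int sample = raw >= 0x8000 ? raw - 0x10000 : raw;  // two's complement
            out.push_back(static_cast<float>(sample) / 32768.0f);
            hasPending_ = false;
        }
        return out;
    }

private:
    std::uint8_t pending_ = 0;
    bool hasPending_ = false;
};
```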

Access explicit reasoning traces from models that expose them - DeepSeek Reasoner and Grok reasoning models. Reasoning and final content are delivered via separate delegates.
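
DeepSeek's API returns the reasoning trace in a `reasoning_content` field, separate from the final `content`. This also applies to streamed deltas. The sketch below models one already-parsed delta and routes each part to its own callback, which mirrors the separate-delegate design described above. `StreamDelta` and `ReasoningRouter` are hypothetical types, not the plugin's delegates, and JSON parsing is omitted.

```cpp
#include <cassert>
#include <functional>
#include <optional>
#include <string>

// Hypothetical model of one streamed delta after JSON parsing.
struct StreamDelta {
    std::optional<std::string> reasoningContent;  // e.g. DeepSeek "reasoning_content"
    std::optional<std::string> content;           // final answer text
};

// Routes each part of a delta to its own callback, so reasoning text never
// mixes with the answer.
class ReasoningRouter {
public:
    std::function<void(const std::string&)> onReasoning;
    std::function<void(const std::string&)> onContent;

    void Dispatch(const StreamDelta& delta) const {
        if (delta.reasoningContent && !delta.reasoningContent->empty() && onReasoning)
            onReasoning(*delta.reasoningContent);
        if (delta.content && !delta.content->empty() && onContent)
            onContent(*delta.content);
    }
};
```

Keeping the two streams apart lets a game show the trace in a debug panel, or hide it, without it ever leaking into spoken dialogue.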

Cancel any in-flight request at any time - essential for responsive applications that need to interrupt and restart generation mid-stream.
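
Cancellation is usually cooperative: the code consuming the stream checks a flag between chunks and stops delivering output once the flag is set. The sketch assumes that model. `CancellationToken` and `DeliverChunks` are hypothetical and stand in for whatever cancel call the plugin actually exposes. The flag is a `std::atomic<bool>` because in practice the game thread cancels while a network thread delivers.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical per-request cancellation flag (not the plugin's API).
// Atomic because the game thread may cancel while another thread delivers.
class CancellationToken {
public:
    void Cancel() { cancelled_.store(true); }
    bool IsCancelled() const { return cancelled_.load(); }

private:
    std::atomic<bool> cancelled_{false};
};

// Delivers chunks in order until the token is cancelled.
// Returns how many chunks reached the callback.
template <typename OnChunk>
std::size_t DeliverChunks(const std::vector<std::string>& chunks,
                          const CancellationToken& token, OnChunk onChunk) {
    std::size_t delivered = 0;
    for (const std::string& chunk : chunks) {
        if (token.IsCancelled()) break;  // checked before every chunk
        onChunk(chunk);
        ++delivered;
    }
    return delivered;
}
```

To interrupt and restart generation, cancel the old request and start the new one with a fresh token. Late chunks from the old request are then dropped instead of leaking into the new response.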

Programmatically query available voices from Google Cloud and Azure TTS at runtime. Filter by language and locale to dynamically select voices in-game.
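
Both services tag each voice with locale codes such as `en-US`, and voice names follow patterns like `en-US-JennyNeural` (Azure) or `en-US-Neural2-A` (Google Cloud). The sketch below filters an already-fetched list either by exact locale or by bare language code, so `en` matches both `en-US` and `en-GB`. `VoiceInfo`, `MatchesLocale`, and `FilterVoices` are hypothetical. For simplicity, the sketch keeps one locale per voice and compares case-sensitively, although BCP 47 tags are case-insensitive.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical voice record; the plugin's actual voice struct may differ.
struct VoiceInfo {
    std::string name;    // e.g. "en-US-JennyNeural"
    std::string locale;  // BCP 47 tag such as "en-US"
};

// True if `locale` equals `filter`, or `filter` is a bare language code
// ("en") and `locale` is that language followed by '-' and a region.
bool MatchesLocale(const std::string& locale, const std::string& filter) {
    if (locale == filter) return true;
    return locale.size() > filter.size() &&
           locale.compare(0, filter.size(), filter) == 0 &&
           locale[filter.size()] == '-';
}

// Returns the voices whose locale matches `filter`, preserving order.
std::vector<VoiceInfo> FilterVoices(const std::vector<VoiceInfo>& voices,
                                    const std::string& filter) {
    std::vector<VoiceInfo> result;
    for (const VoiceInfo& voice : voices)
        if (MatchesLocale(voice.locale, filter)) result.push_back(voice);
    return result;
}
```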

Detailed error status and message information returned for every request. Handle provider errors, network failures, and rate limits gracefully from Blueprint or C++.
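
A common pattern is to classify each failure and retry only transient ones with exponential backoff. The sketch assumes that the error status surfaces as an HTTP status code, with 0 meaning no response was received. `ErrorKind`, `Classify`, `IsRetryable`, and `BackoffDelay` are hypothetical names, not the plugin's error API.

```cpp
#include <cassert>
#include <chrono>

enum class ErrorKind { None, Auth, RateLimited, Server, Client, Network };

// Maps an HTTP status to a coarse category (0 = no response, an assumed convention).
ErrorKind Classify(int statusCode) {
    if (statusCode == 0) return ErrorKind::Network;
    if (statusCode >= 200 && statusCode < 300) return ErrorKind::None;
    if (statusCode == 401 || statusCode == 403) return ErrorKind::Auth;
    if (statusCode == 429) return ErrorKind::RateLimited;
    if (statusCode >= 500) return ErrorKind::Server;
    return ErrorKind::Client;  // other 4xx: the request itself needs fixing
}

// Only transient failures are worth retrying.
bool IsRetryable(ErrorKind kind) {
    return kind == ErrorKind::RateLimited || kind == ErrorKind::Server ||
           kind == ErrorKind::Network;
}

// Exponential backoff: base * 2^attempt, capped (doubling stops at the cap).
std::chrono::milliseconds BackoffDelay(int attempt, std::chrono::milliseconds base,
                                       std::chrono::milliseconds cap) {
    std::chrono::milliseconds delay = base;
    for (int i = 0; i < attempt && delay < cap; ++i) delay *= 2;
    return delay < cap ? delay : cap;
}
```

Authentication errors (401/403) should be surfaced to the developer rather than retried. If a 429 response includes a `Retry-After` header, that value is a better delay than the computed backoff.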

Blueprint Example - Streaming conversation with OpenAI

A Windows demo is available to experience the plugin's capabilities firsthand. The demo covers AI conversations, text-to-speech, and multi-provider switching - with a full Blueprint source project also available for reference.

Test conversations and TTS with different providers side by side

Compare both response modes with live custom input

Full Blueprint implementation in the demo project for reference

Video Tutorial

Runtime AI Chatbot Integrator is the AI layer of the Georgy Dev plugin suite - combine it with speech recognition, offline TTS, and lip sync to build complete voice-driven AI character pipelines.

Process and play TTS audio output at runtime. Essential companion for handling streaming audio data from any TTS provider.

Drive real-time lip sync on MetaHuman and custom characters directly from AI-generated speech output.

Offline speech-to-text powered by Whisper - convert player speech to text and feed it directly into the AI chatbot.

Fully offline voice synthesis with 900+ voices. Complement cloud TTS providers for offline or privacy-sensitive scenarios.

Generate dialogue with on-device LLMs offline, then drive lip sync from the synthesized speech for fully offline character interactions.

Comprehensive documentation covers all providers, conversation modes, TTS workflows, reasoning model output, voice discovery, and error handling - with Blueprint and C++ examples throughout.

Step-by-step guides for all features, with Blueprint and C++ examples

Active Discord community with developer support

Tailored integration or feature development, available by email

Blueprint Example - Sending a conversation request

Available on Fab for UE 5.0 – 5.7. Supports 9 AI providers, streaming responses, reasoning models, and enterprise-grade TTS with 70+ languages.